Cooling

Cooling Built for AI's Hardest Workloads

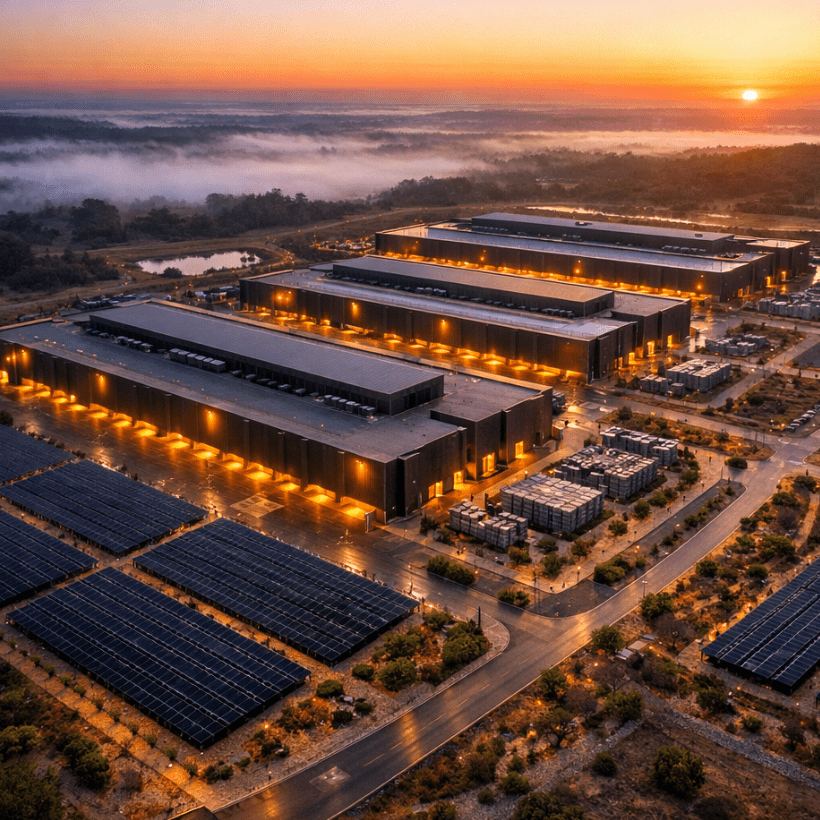

Heat is the silent killer of artificial intelligence. Every GPU cluster running a large AI model generates heat at a scale most people never think about, and if that heat isn't removed fast enough, chips throttle, performance collapses, and hardware fails. The more powerful the AI, the more dangerous the heat. At DST Data Centers, cooling isn't an afterthought bolted onto the end of a design process. It is one of the first things we engineered, because at up to 1,000 kilowatts per rack, air conditioning simply doesn't cut it anymore.

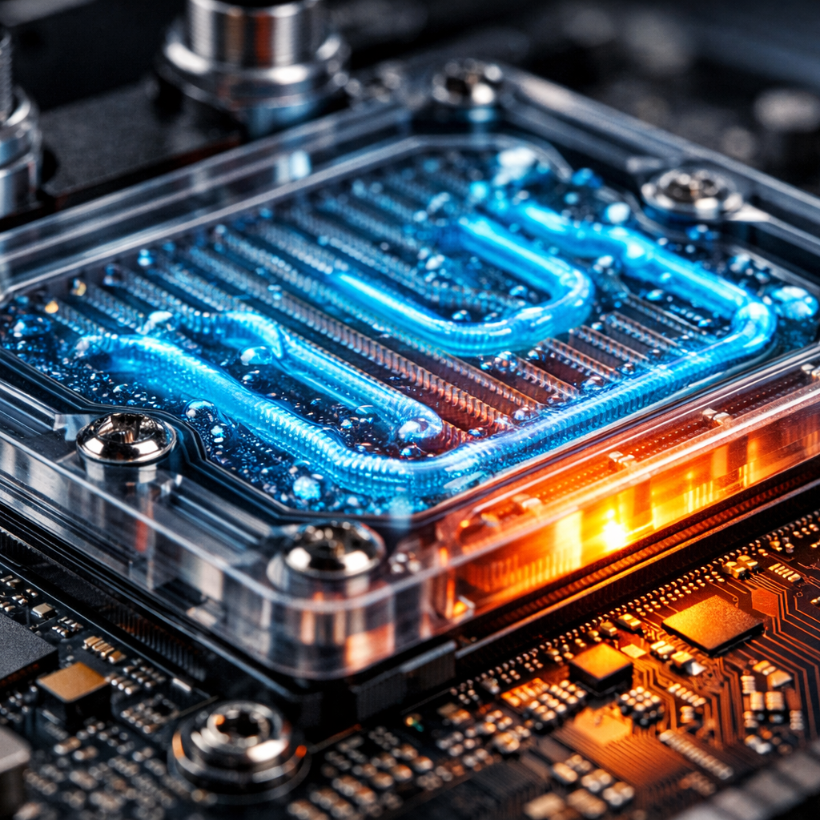

Endeavour uses direct-to-chip liquid cooling, a technology that carries heat away from the processor itself before it ever has a chance to build up in the room. Cold liquid flows in, hot liquid flows out, and your GPU runs at full performance continuously: not throttled, not degraded, not interrupted. Combined with our closed-loop chiller system that uses almost no water, and our Computer Room Air Handling (CRAH) units positioned for maximum airflow precision, Endeavour delivers the most thermally efficient AI computing environment available on the African continent, and one of the most advanced anywhere in the world.
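To give a feel for the "cold liquid in, hot liquid out" principle, here is a minimal sketch of the steady-state heat balance Q = ṁ · c_p · ΔT. It assumes plain water as the coolant and a 10 K temperature rise across the rack; both are illustrative assumptions, not Endeavour design values, and the function name is hypothetical.

```python
# Illustrative only: coolant properties and temperature rise are assumptions,
# not published Endeavour design values.
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximate value for liquid water


def coolant_flow_l_per_s(heat_load_kw: float, delta_t_k: float) -> float:
    """Water flow (litres/second) needed to carry away heat_load_kw when the
    coolant warms by delta_t_k between inlet and outlet.

    Rearranges the heat balance Q = m_dot * c_p * delta_T for m_dot.
    """
    if heat_load_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat_load_kw must be >= 0 and delta_t_k must be > 0")
    heat_load_w = heat_load_kw * 1000.0
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L


if __name__ == "__main__":
    # A 1,000 kW rack with an assumed 10 K coolant temperature rise.
    print(round(coolant_flow_l_per_s(1000.0, 10.0), 1))  # prints 23.9
```

Under these assumptions, a single 1,000 kW rack needs roughly 24 litres of water per second flowing through it. A real loop may use a different coolant or temperature rise, which changes the figure.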

Feature Process

- Understand Your Thermal Load: We begin by mapping the exact heat output of your workload: how many racks, what GPU model, what power density, and how continuously they will run. AI training jobs run at full intensity for days or weeks at a time, so we design your cooling environment for sustained peak load, not occasional bursts.

- Design Your Cooling Architecture: Based on your thermal profile, we configure the right combination of direct-to-chip liquid cooling, liquid-to-liquid heat exchange, and Computer Room Air Handling (CRAH) unit placement for your specific data hall module. Every element is sized precisely: no over-engineering, no under-provisioning, no guesswork.

- Commission and Test: Before a single GPU goes live, we run full thermal commissioning across your allocated space. We test every cooling loop, every chiller circuit, and every airflow path, and we validate that your environment can sustain maximum rack density continuously before your workloads ever touch it.

- Monitor, Maintain and Scale: Once operational, our on-site engineering team monitors thermal performance around the clock. As you scale your compute footprint, we scale your cooling capacity with it, module by module and phase by phase, with zero disruption to running workloads.
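The thermal-load mapping step can be sketched in a few lines. This is a hypothetical illustration rather than the tooling our engineers use: the `RackGroup` type, its fields, and the example figures are all assumptions. It relies on the fact that essentially all electrical power drawn by IT equipment ends up as heat, and it shows why sizing for sustained peak differs from sizing for the average.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class RackGroup:
    """Hypothetical description of one group of identical racks."""
    rack_count: int
    kw_per_rack: float       # power draw per rack at full intensity, in kW
    duty_cycle: float = 1.0  # fraction of time spent at full intensity


def peak_heat_load_kw(groups: Iterable[RackGroup]) -> float:
    """Heat to remove when every rack runs at full intensity at once."""
    return sum(g.rack_count * g.kw_per_rack for g in groups)


def average_heat_load_kw(groups: Iterable[RackGroup]) -> float:
    """Time-averaged heat load, weighting each group by its duty cycle."""
    return sum(g.rack_count * g.kw_per_rack * g.duty_cycle for g in groups)


if __name__ == "__main__":
    # Made-up deployment: 8 racks at 250 kW running continuously,
    # plus 2 racks at 1,000 kW that are busy half the time.
    deployment = [
        RackGroup(rack_count=8, kw_per_rack=250.0),
        RackGroup(rack_count=2, kw_per_rack=1000.0, duty_cycle=0.5),
    ]
    print(peak_heat_load_kw(deployment))     # prints 4000.0
    print(average_heat_load_kw(deployment))  # prints 3000.0
```

In this made-up case, a cooling plant sized for the 3,000 kW average would fall 1,000 kW short whenever both large racks ran flat out, which is why the design target is the peak figure.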

Cooling Infrastructure Outcomes

Here are six things our customers gain when they choose Endeavour's cooling infrastructure for their AI operations:

1. Full-Speed GPU Performance: Direct-to-chip liquid cooling keeps processors at their designed operating temperature continuously. No thermal throttling. No performance degradation. Your GPUs run at 100%, exactly the way the manufacturer intended, for as long as your workload demands.

2. Ultra-High Rack Density Support: Our cooling architecture supports racks consuming from 250 kW all the way to 1,000 kW. That is roughly 50 to 100 times the per-rack density of a standard data center. If your hardware vendor is building the next generation of AI accelerators, Endeavour is already prepared for them.

3. Near-Zero Water Consumption: Our closed-loop chiller system recirculates the same water continuously, with almost no evaporation or discharge. Our Water Usage Effectiveness (WUE) target is near zero litres per kilowatt-hour, saving billions of litres of water annually compared to traditional cooling tower designs. In a world increasingly conscious of water scarcity, this is not just responsible; it is a strategic advantage.

4. N+1 Mechanical Redundancy: Every cooling subsystem (chillers, pumps, and cooling distribution units) is provisioned with at least one fully operational spare beyond what the load requires. If any component requires maintenance or experiences a fault, cooling continues without interruption. Your workloads never notice.

5. Precision Airflow Distribution: Each data center module features Computer Room Air Handling units positioned in two galleries on opposite sides of the hall, creating a controlled, highly efficient airflow pattern that eliminates hot spots and ensures uniform temperature across the entire computing environment.

6. Designed for What's Coming: The GPU chips being released in 2027, 2028, and beyond will generate more heat per unit than anything available today. Endeavour's cooling infrastructure was not designed for yesterday's hardware. It was designed for the next decade of AI compute, so our customers never outgrow it.
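Two of the outcomes above rest on simple arithmetic. The sketch below shows how WUE is conventionally calculated (annual water use divided by annual IT energy) and how an N+1 unit count follows from a heat load. The function names, unit capacities, and example figures are hypothetical and are not Endeavour specifications.

```python
import math


def wue_l_per_kwh(annual_water_litres: float, annual_it_energy_kwh: float) -> float:
    """Water Usage Effectiveness: litres of water used per kWh of IT energy."""
    if annual_it_energy_kwh <= 0:
        raise ValueError("annual_it_energy_kwh must be > 0")
    return annual_water_litres / annual_it_energy_kwh


def units_required_n_plus_1(heat_load_kw: float, unit_capacity_kw: float) -> int:
    """Number of identical cooling units to install under N+1 redundancy.

    N is the smallest count that covers the load; one extra unit is added so
    the load is still covered with any single unit out of service.
    """
    if heat_load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("heat_load_kw and unit_capacity_kw must be > 0")
    n = math.ceil(heat_load_kw / unit_capacity_kw)
    return n + 1


if __name__ == "__main__":
    # Made-up figures: 1 MW of IT load for a year is 8,760,000 kWh.
    print(wue_l_per_kwh(876_000, 8_760_000))
    # Made-up figures: a 4,000 kW heat load served by 1,500 kW chillers.
    print(units_required_n_plus_1(4000.0, 1500.0))  # prints 4
```

With these made-up numbers, three chillers cover the load and a fourth provides the spare. A closed-loop system that loses very little water drives the WUE numerator, and therefore the ratio, toward zero.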